A guide to understanding and implementing AI security

Security teams often deal with large volumes of alerts, logs, and signals. When investigations rely heavily on manual triage and static rules, important threats can be difficult to prioritize within that noise.

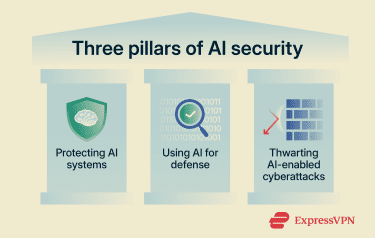

AI security is one approach organizations use to manage this complexity. Its scope extends beyond automation and typically includes using AI to strengthen defensive capabilities, protecting AI systems, and understanding how attackers may incorporate AI into their tactics.

This article explains these three pillars. It outlines how they fit into modern risk management practices, the practical roles they can play across the attack surface, and the governance considerations needed to use them responsibly.

What is AI security?

AI security is commonly broken into three related areas:

Protect AI systems

AI security covers protecting AI-integrated systems or applications from attack, misuse, and data leakage. It focuses on securing the AI models, training data, inference pipeline, infrastructure, and APIs.

Use AI to improve cybersecurity

AI security can mean the use of AI to improve cybersecurity and compliance by detecting threats, speeding up investigations, and supporting monitoring. This strengthens standard security processes like detection, prevention, and response.

Thwart AI-enabled cyberattacks

Another key focus of AI security is understanding AI-enabled cyberattacks, like deepfake scams, to effectively block and counter them. That understanding also provides insights that can help improve the other two pillars.

Why AI security matters

Security teams face a shifting environment that introduces new pressures and risks that organizations need to manage.

Threat actors may leverage AI tools

Attackers can use AI to increase the speed and scale of their campaigns. With generative AI tools, criminals can draft convincing phishing emails, write malicious code, and create cloned voices and deepfakes in minutes, then use that material to support social engineering attempts.

Security data volume and complexity are rising

Modern environments generate massive amounts of activity data from endpoints, cloud applications, and networks, such as logs and event records. Security operations centers (SOCs) receive thousands of alerts daily, creating a volume that is difficult to manage.

This problem is compounded by a shortage of skilled security staff, leading to burnout and alert fatigue. When analysts can’t keep up with the volume of raw logs and signals, threats may go unnoticed.

Regulatory and compliance expectations are increasing

Regulators and standards bodies now expect organizations to govern the use of AI rigorously. Frameworks from groups like the National Institute of Standards and Technology (NIST) recommend that companies track AI risks, keep an inventory of models and key documentation, and maintain audit-ready records for reviews.

Organizations that fail to control their AI systems may face legal penalties, failed audits, and reputational damage. The demand for transparency means security teams must account for the models and datasets they use.

AI introduces new vulnerabilities

AI security matters because AI systems can change how data moves, how decisions are made, and how attackers can influence those decisions.

When teams track where AI is used, limit what data models can access, test inputs for manipulation, and keep clear records for audits, they reduce the chance that an AI feature becomes a path to a breach, a bad automated decision, or a failed compliance review.

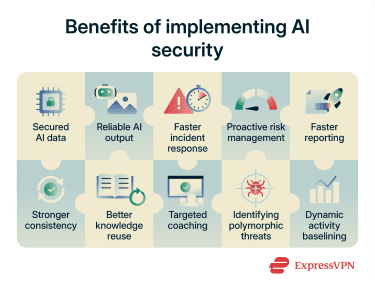

Benefits of implementing AI security

AI security is changing how defenders handle activity across their environments. Here are some of the ways implementing the three focus areas of AI security can help:

Benefits of protecting the AI system

- Secured AI data: Monitoring and flagging the exposure of sensitive training data or tracking who accesses your AI models helps protect the integrity of the data.

- Reliable AI output: Spotting gradual changes in a model’s performance before they turn into real gaps helps maintain accuracy and reduces the chance of incorrect decisions.

Benefits of using AI for cybersecurity

- Faster incident response: Using AI for cybersecurity could speed up response by sorting alerts and suggesting specific next steps. Instead of manually reviewing dozens of separate logs, analysts see grouped events. It can also help block attacks more quickly, for example by isolating affected endpoints.

- Proactive risk management: AI analyzes logs and configurations to identify exposed services or gaps in network segmentation. It flags when security settings drift from the approved policy (e.g., a firewall port opened by mistake), which can help improve your security posture.

- Faster and simplified reporting: Cybersecurity AI tools can draft incident timelines and manage evidence, which reduces manual copy-and-paste errors.

- Stronger consistency: AI evaluates alerts using the same criteria each time. This helps ensure the initial analysis is consistent regardless of which analyst is on shift.

- Better knowledge reuse: Analysts can review similar past incidents and successful playbooks (predefined sets of actions) while they work with the help of AI. This allows the team to apply solutions that worked previously, so they don’t have to solve the same problem twice.

- Targeted coaching: AI can highlight exactly where a team member missed a step or followed the wrong process, helping managers focus training where it’s needed most.

Benefits of thwarting AI-powered attacks

- Identifying unknown and polymorphic threats: Traditional rules often fail to spot attacks that constantly change their code or move slowly to avoid triggering alarms. AI and behavior-based detection can help quickly detect and stop these threats.

- Dynamic activity baselining: AI models can be taught how users normally interact with apps and flag subtle differences. This catches insider abuse, compromised accounts, or automated fraud bots that look legitimate to a standard firewall but behave slightly differently than a human.
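As a rough illustration of activity baselining, the sketch below flags a value that sits far from a user's historical average. It is a simple z-score check, not how any particular product works; the function name, the threshold of 3 standard deviations, and the sample request counts are all assumptions made for the example.

```python
from statistics import mean, stdev

def is_anomalous(history, observed, threshold=3.0):
    """Return True if `observed` is more than `threshold` standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold

# Hypothetical requests-per-minute for one user across recent sessions.
baseline = [12, 15, 11, 14, 13, 12, 16, 14]
print(is_anomalous(baseline, 13))  # False: within the normal range
print(is_anomalous(baseline, 90))  # True: far outside the baseline
```

Real systems usually baseline many signals at once and update the baseline over time, but the core idea of comparing new activity to learned normal behavior is the same.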

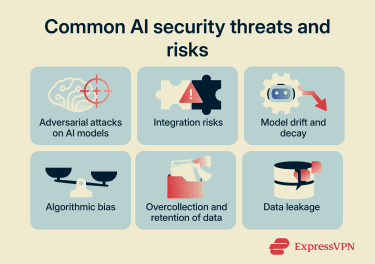

Key AI security risks and vulnerabilities

Here are some of the vulnerabilities that affect AI security, particularly in the focus areas dedicated to protecting AI systems and using AI as a cybersecurity tool.

Adversarial attacks on AI models

Adversarial attacks occur when attackers create inputs specifically designed to trick a model. In a security context, this means formatting a malicious log entry, email, or network packet so that it looks harmless to the AI.

Examples include:

- Evasion attacks: Attackers subtly alter malicious code or phishing emails to bypass detection. The changes may be invisible to humans but cause the AI model to classify the threat as safe.

- Data poisoning: By altering data and feeding the system bad information, attackers teach the model to ignore specific malicious behaviors in the future.

- Model inversion: Attackers query a model repeatedly to analyze its outputs. By studying these responses, they can reverse engineer the model to infer or reconstruct some of the sensitive data it was trained on.

- Prompt injection: Attackers craft inputs that manipulate the instructions given to an AI assistant. The malicious prompt attempts to override the system’s intended rules so the model reveals sensitive information, ignores safeguards, or performs unintended actions.

Integration risks

If a vendor updates a library or API that your AI tool relies on, it can change how your security model processes data. A bad update could break detection logic or stop logging altogether without warning.

Integrations sometimes require high-level access privileges to function. If an attacker compromises a third-party connector or steals an API token, they can gain broad access to the organization’s environment.

Model drift and decay

AI models lose accuracy over time because the environment they monitor changes. This phenomenon is known as drift:

- Data drift: This happens when the input data changes. For example, a company introduces new device types that the model has never seen, confusing its analysis.

- Concept drift: This happens when the definition of "malicious" changes. For example, attackers invent a new fraud technique that the model was not trained to recognize.

Both types of drift can cause the model to miss real threats (false negatives) or flood analysts with useless alerts (false positives).
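One common way to check for data drift is to compare the distribution of a feature at training time with its distribution in production. The sketch below computes a population stability index (PSI) over equal-width bins. The function name, the bin count, and the 0.25 cut-off (a frequently cited rule of thumb, not a standard) are assumptions for illustration.

```python
import math

def population_stability_index(expected, actual, bins=5):
    """Compare two samples of one numeric feature; larger values mean
    the `actual` sample has shifted further from `expected`."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: training data vs. shifted production data.
training = list(range(100))
production = [v + 50 for v in range(100)]
psi = population_stability_index(training, production)
print("drift suspected" if psi > 0.25 else "stable")
```

Concept drift is harder to detect this way because the inputs may look unchanged; it usually shows up as falling accuracy on newly labeled incidents.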

Algorithmic bias

Security models can develop biases based on the data they were trained on. This leads to unfair decisions that affect legitimate users. For example, a fraud detection model might flag transactions from a specific region or device type as "high risk" simply because its training data was skewed.

This results in valid customers facing transaction holds or account lockouts. If the model cannot explain why it flagged a specific user, the security team can’t defend the decision during an audit.
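A simple first check for this kind of skew is to compare how often legitimate activity is flagged in each group. The sketch below computes a per-group false positive rate. The record layout and group names are hypothetical, and a gap between groups is a prompt for investigation rather than proof of bias.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """records: iterable of (group, flagged, actually_fraud) tuples.
    Returns {group: share of legitimate events that were flagged}."""
    flagged_legit = defaultdict(int)
    total_legit = defaultdict(int)
    for group, flagged, actually_fraud in records:
        if not actually_fraud:
            total_legit[group] += 1
            if flagged:
                flagged_legit[group] += 1
    return {g: flagged_legit[g] / total_legit[g] for g in total_legit}

# Hypothetical labeled outcomes for two regions (all legitimate).
records = (
    [("region_a", True, False)] * 1 + [("region_a", False, False)] * 9
    + [("region_b", True, False)] * 4 + [("region_b", False, False)] * 6
)
print(false_positive_rate_by_group(records))
# {'region_a': 0.1, 'region_b': 0.4}
```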

Overcollection and retention of data

To detect threats, AI security tools often process sensitive data from logs, emails, tickets, and chat. Storing data for long periods raises data privacy concerns, as it becomes an attractive target for attackers.

Data leakage

AI systems can expose sensitive information if access controls or retrieval systems are misconfigured. For example, an assistant might retrieve data from the wrong incident record or return information from a dataset the user should not be able to access.
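One way to reduce this risk is to enforce access checks on retrieved records before they ever reach the model. The sketch below filters candidate documents by the requesting user's groups. The `Document` type, its fields, and the group names are assumptions for illustration, not part of any specific retrieval framework.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this record

def filter_for_user(candidates, user_groups):
    """Keep only documents the user is entitled to see. Run this after
    retrieval and before the text is placed into a model prompt."""
    user_groups = set(user_groups)
    return [d for d in candidates if d.allowed_groups & user_groups]

# Hypothetical retrieval results for an analyst in the "soc" group.
results = [
    Document("inc-101", "Phishing incident notes", frozenset({"soc"})),
    Document("hr-007", "Employee review", frozenset({"hr"})),
]
visible = filter_for_user(results, {"soc"})
print([d.doc_id for d in visible])  # ['inc-101']
```

Filtering before prompt construction matters because anything placed in the prompt can end up in the model's answer.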

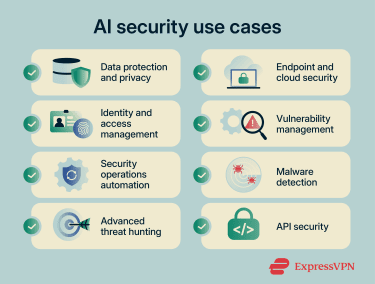

AI security use cases

There are many ways that AI-driven tools can work together to improve cybersecurity, protect AI systems, and thwart AI-enabled attacks. Here are some common examples:

1. Data protection and privacy

AI supports data loss prevention (DLP) by finding and labeling sensitive data, then monitoring its movement across AI models, cloud storage, Software-as-a-Service (SaaS) apps, and endpoints. Traditional DLP tools may rely on simple keyword matching, which only flags files containing exact text strings.

AI tools use machine learning to understand context, allowing them to spot personal data patterns and contextual signs. They also detect exposed secrets in code, such as passwords or API keys hidden inside software scripts.
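For contrast with the context-aware approach described above, the sketch below shows the simpler pattern-matching baseline for finding secrets in code. The two patterns are illustrative assumptions and far from exhaustive; the key shown is the placeholder value AWS uses in its own documentation.

```python
import re

# Illustrative patterns only; real scanners ship many more rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": re.compile(
        r"(?i)(password|passwd|secret|api[_-]?key)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}

def scan_for_secrets(source):
    """Return a list of (line_number, rule_name) for each match."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings

sample = (
    'name = "demo"\n'
    'api_key = "abcd1234efgh5678"\n'
    'key_id = "AKIAIOSFODNN7EXAMPLE"\n'
)
print(scan_for_secrets(sample))
# [(2, 'hardcoded_credential'), (3, 'aws_access_key_id')]
```

Rules like these miss secrets that do not follow a known format, which is the gap that context-aware models aim to close.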

2. Endpoint and cloud security

With AI-powered extended detection and response (XDR), analysts get grouped alerts and risk scores instead of reviewing raw events. In the cloud where AI models run, anomaly detection flags unusual storage access, unexpected API calls, or workloads that appear to be cryptomining.

3. Identity and access management (IAM)

IAM systems support risk-based access control by scoring sign-ins, including sign-ins to AI models and tools, based on context such as device fingerprinting, location, and session behavior. Low-risk sessions follow standard controls, while higher-risk sessions can trigger extra checks. This helps catch identity-driven attacks that simple rules miss, such as impossible travel (sign-ins from two far-apart locations within a window too short for real travel) or unusual admin actions.
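The impossible-travel check can be sketched as a speed calculation between two consecutive sign-ins. The example below uses the haversine great-circle distance; the 900 km/h limit (roughly airliner speed), the tuple layout, and the sample coordinates are assumptions for illustration.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def is_impossible_travel(prev, curr, max_speed_kmh=900.0):
    """prev and curr are (timestamp, latitude, longitude) sign-in records."""
    distance = haversine_km(prev[1], prev[2], curr[1], curr[2])
    hours = (curr[0] - prev[0]).total_seconds() / 3600
    if hours <= 0:
        return distance > 0  # same instant, different place
    return distance / hours > max_speed_kmh

# Hypothetical sign-ins: London, then New York 30 minutes later.
london = (datetime(2024, 1, 1, 9, 0), 51.5074, -0.1278)
new_york = (datetime(2024, 1, 1, 9, 30), 40.7128, -74.0060)
print(is_impossible_travel(london, new_york))  # True
```

In practice this signal is noisy on its own, since VPNs and mobile networks can shift a user's apparent location, so it is usually one input to a broader risk score.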

4. Vulnerability management

AI helps teams prioritize the most critical vulnerabilities by ranking them based on real-world risk. Many platforms combine scanner results with business impact data to identify flaws that are actively being exploited. Used well, this shortens the gap between discovery and remediation for AI code and pipeline issues that have the clearest path to compromise.
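The ranking idea can be sketched with a simple scoring function. The fields, the multiplier for active exploitation, and the 1-to-3 criticality scale are assumptions chosen for the example; real platforms use their own models and data sources.

```python
from dataclasses import dataclass

@dataclass
class VulnFinding:
    finding_id: str
    cvss: float               # 0.0-10.0 base score from the scanner
    actively_exploited: bool  # e.g. taken from a threat intelligence feed
    asset_criticality: int    # 1 (low) to 3 (high), set by the business

def risk_score(finding):
    """Hypothetical weighting: severity scaled by business impact,
    doubled when exploitation has been observed in the wild."""
    score = finding.cvss * finding.asset_criticality
    if finding.actively_exploited:
        score *= 2
    return score

def prioritize(findings):
    return sorted(findings, key=risk_score, reverse=True)

findings = [
    VulnFinding("EXAMPLE-1", 9.8, False, 1),
    VulnFinding("EXAMPLE-2", 7.5, True, 3),
    VulnFinding("EXAMPLE-3", 5.0, False, 2),
]
print([f.finding_id for f in prioritize(findings)])
# ['EXAMPLE-2', 'EXAMPLE-3', 'EXAMPLE-1']
```

Note how the highest raw severity score ends up last: it sits on a low-criticality asset with no known exploitation.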

5. Security operations automation

AI supports automation in the SOC by grouping related alerts and suggesting next checks. In some setups, the automation platform triggers a playbook to isolate endpoints, disable accounts, or block malicious IP addresses. This accelerates containment while maintaining a record of the system’s actions.
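Alert grouping can be approximated without any machine learning by clustering alerts on the same host that arrive close together in time. The sketch below does that; the alert fields and the five-minute window are assumptions for illustration, and AI-driven tools typically correlate on many more attributes.

```python
from collections import defaultdict

def group_alerts(alerts, window_seconds=300):
    """alerts: list of dicts with 'host', 'timestamp' (epoch seconds), 'rule'.
    Alerts on the same host separated by no more than `window_seconds`
    from the previous alert are placed in the same group."""
    by_host = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a["timestamp"]):
        by_host[alert["host"]].append(alert)

    groups = []
    for host_alerts in by_host.values():
        current = [host_alerts[0]]
        for alert in host_alerts[1:]:
            if alert["timestamp"] - current[-1]["timestamp"] <= window_seconds:
                current.append(alert)
            else:
                groups.append(current)
                current = [alert]
        groups.append(current)
    return groups

# Hypothetical alerts from two hosts.
alerts = [
    {"host": "web-1", "timestamp": 0, "rule": "suspicious_login"},
    {"host": "db-1", "timestamp": 50, "rule": "port_scan"},
    {"host": "web-1", "timestamp": 100, "rule": "privilege_change"},
    {"host": "web-1", "timestamp": 1000, "rule": "suspicious_login"},
]
print(len(group_alerts(alerts)))  # 3
```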

6. Malware detection

Malware detection can leverage AI to help classify new samples and spot suspicious behavior, such as ransomware activity. Teams use those signals to respond faster when attackers change tactics during an ongoing attack (e.g., by switching to polymorphic malware).

7. Advanced threat hunting

Threat hunting involves searching for rare attacker activity within large log sets, even in AI systems. Machine learning can cluster similar behavior and rank unusual entities. Some XDR tools map related identity, endpoint, and network activity into a single view to help threat hunters move from a broad search to a focused set of hypotheses they can test against evidence.

8. API security

APIs connected to AI models can expose data and business logic, making them targets for attackers probing for weak authentication. AI anomaly detection can learn baseline request patterns and flag unusual sequences or sudden spikes in error rates that suggest abuse. This works best when paired with strong identity controls for service accounts.

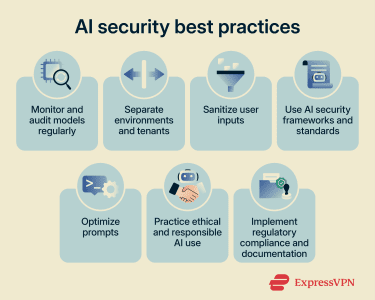

AI security best practices

AI security works best when it sits inside clear governance, tested models, and well-designed deployments. The practices below give teams a starting point for building AI security risk mitigation strategies into day-to-day operations.

Monitor and audit models regularly

AI models used in security need ongoing checks. Teams should track performance over time, test for model drift, and review outputs for false positives. Ongoing work on model governance focuses on continuous testing using new data samples and red teaming.

Regular audits verify both accuracy and system integrity, reducing the risk of AI model degradation.

Separate environments and tenants

Teams should separate environments for development, testing, and production. They must also restrict who can change models or prompts and use strong identity controls for all admin access.

In shared platforms, tenant isolation ensures that one customer’s data, prompts, and models stay separate from others. This limits the blast radius if a single component is compromised.

Sanitize user inputs

Input sanitization means checking and cleaning user-provided text, code, or data before the system processes it. For AI security, that includes filtering prompts for injection attacks, stripping dangerous characters in code, and blocking access patterns that aim to exploit tools connected to a model. Combining input validation with output checks prevents a single malicious prompt from triggering broad changes across systems.
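A minimal sketch of prompt screening is shown below. It normalizes the text, strips control characters, and checks a short deny-list. The patterns, the length limit, and the function name are assumptions for illustration. Pattern matching alone is easy to bypass, so a check like this is best treated as one layer alongside output checks and restricted tool permissions.

```python
import re
import unicodedata

# Illustrative deny-list; attackers can rephrase around fixed patterns.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"reveal (the |your )?(system prompt|hidden instructions)", re.IGNORECASE),
]
MAX_PROMPT_CHARS = 4000

def screen_prompt(text):
    """Return (cleaned_text, reasons). An empty reasons list means no rule fired."""
    normalized = unicodedata.normalize("NFKC", text)
    # Drop control characters but keep newlines and tabs.
    cleaned = "".join(
        ch for ch in normalized
        if ch in "\n\t" or unicodedata.category(ch) != "Cc"
    )
    reasons = []
    if len(cleaned) > MAX_PROMPT_CHARS:
        reasons.append("too_long")
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(cleaned):
            reasons.append("matched:" + pattern.pattern)
    return cleaned, reasons

cleaned, reasons = screen_prompt("Summarize alert 42\x00")
print(cleaned, reasons)  # Summarize alert 42 []
```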

Use AI security frameworks and standards

Organizations get better results when they anchor AI security to recognized frameworks. These guides help teams map AI risks to controls, including supply chain security and mitigation strategies. Using established standards helps security and compliance teams speak the same language and track AI model vulnerabilities alongside other risks.

Optimize prompts

When security teams use large language models (LLMs) for analysis or investigations, effective prompt design is a security control. Clear prompts define the scope, data sources, and allowed actions, reducing the risk of hallucinations (incorrect outputs). Structured prompts and templates for common tasks improve reliability and make model outputs easier to review and log for audit.
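A structured prompt can be as simple as a fixed template that states scope, data sources, and allowed actions before the task. The template wording and parameter names below are assumptions for illustration; no specific LLM API is called.

```python
import json

PROMPT_TEMPLATE = """You are assisting a security analyst.
Scope: {scope}
Allowed data sources: {sources}
Allowed actions: {actions}
If the evidence is insufficient, answer "unknown" instead of guessing.

Task: {task}
Evidence (JSON):
{evidence}
"""

def build_prompt(task, evidence, scope, sources, actions):
    """Fill the template so every request has the same reviewable shape."""
    return PROMPT_TEMPLATE.format(
        scope=scope,
        sources=", ".join(sources),
        actions=", ".join(actions),
        task=task,
        evidence=json.dumps(evidence, indent=2, sort_keys=True),
    )

prompt = build_prompt(
    task="Summarize this alert and suggest the next check.",
    evidence={"alert_id": 1, "rule": "suspicious_login"},
    scope="triage only",
    sources=["edr_logs"],
    actions=["summarize", "recommend"],
)
print(prompt)
```

Because every prompt follows the same layout, the exact text sent to the model can be logged and compared during an audit.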

Practice ethical and responsible AI use

Ethical use of AI links directly to trust and compliance. Teams should review AI use cases for fairness and transparency, particularly when models influence monitoring or access decisions. This includes implementing detection for algorithmic bias and maintaining human oversight for high-impact actions. Giving users ways to review automated decisions helps keep monitoring aligned with legal duties.

Implement regulatory compliance and documentation

AI security work should plug into existing governance processes. That includes keeping records of AI systems in use, their training data sources, known vulnerabilities, and evaluation results. Documentation shows regulators and partners how threats are identified and managed, ensuring that AI logs and model cards are part of regular audit packs.

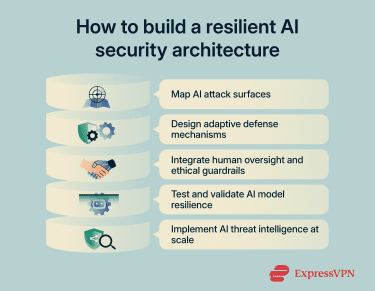

How to build a resilient AI security architecture

Creating resilient AI security supports the integrity of AI systems across data, models, and operations. This approach links security work to day-to-day monitoring, so AI risks remain visible and manageable.

Map AI attack surfaces across the lifecycle

A good starting point is to map where AI touches your environment. That means tracing the lifecycle from data collection and feature engineering (how raw data is turned into inputs a model can use) through training, deployment, and day-to-day use. Risk management guidance describes this as mapping attack surfaces across data inputs, models, inference endpoints, and the platforms that host them.

In practice, the map should cover secure data pipelines, training data manipulation risks, and security issues around third-party models. This assessment should also account for synthetic data risks, where artificially generated datasets might introduce hidden biases or vulnerabilities into the training process.

Design adaptive defense mechanisms for intelligent systems

Resilient architectures treat AI as part of a feedback loop instead of a fixed rule engine. Detection models watch behavior across endpoints, APIs, and identities, then feed high-confidence findings into automated playbooks. These playbooks can isolate affected systems or limit user access to stop an attack from spreading.

On the model side, engineers improve robustness by training models with adversarial examples (altered inputs designed to fool AI models). This helps the system resist new attack variations instead of only the ones it has already seen. Using these techniques helps you react as attackers change tactics, rather than relying on a one-time tuning exercise.

Integrate human oversight and ethical guardrails

Even with automation, humans must decide what’s acceptable. Security architects should keep people involved in high-impact decisions, such as blocking a user, escalating fraud cases, or handing evidence to law enforcement. Governance standards require clear roles, audit trails, and documented reasoning when AI systems influence people’s rights.

In AI security, that translates into review queues for AI-generated findings and controls that let analysts override AI decisions. These guardrails help manage risks in analyst assistants and keep operations aligned with ethics and law.
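The routing logic behind a review queue can be sketched in a few lines. The action names, the confidence threshold, and the `AiFinding` type are assumptions for illustration; the point is that high-impact actions go to a person regardless of how confident the model is.

```python
from dataclasses import dataclass

# Hypothetical set of actions that always require human sign-off.
HIGH_IMPACT_ACTIONS = {"block_user", "escalate_fraud_case", "share_evidence"}

@dataclass
class AiFinding:
    action: str
    confidence: float  # model-reported score in [0, 1]

def route(finding, auto_threshold=0.95):
    """Decide whether a proposed action runs automatically or waits for review."""
    if finding.action in HIGH_IMPACT_ACTIONS:
        return "human_review"
    if finding.confidence >= auto_threshold:
        return "auto_execute"
    return "human_review"

print(route(AiFinding("block_user", 0.99)))     # human_review
print(route(AiFinding("tag_alert", 0.97)))      # auto_execute
print(route(AiFinding("tag_alert", 0.60)))      # human_review
```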

Test and validate AI model resilience

Architectures that rely on AI need security testing, not just accuracy checks. Teams use red teaming and scenario-based exercises to see how models behave under pressure.

Recent work on adversarial evaluation treats strong attack suites as a standard part of model validation, so robustness is measured and tracked instead of assumed. For AI security tools, this testing should happen both before deployment and during live operation, with clear rules for when a model needs to be taken offline.

Implement AI threat intelligence at scale

Traditional threat intelligence focuses on IP addresses, domains, and malware files. AI threat intelligence adds indicators related to AI environments, such as malicious model files or specific prompts used to break chatbots.

Security programs now track threats to models, data pipelines, and cloud infrastructure, feeding those insights into detection rules. Architectures that use this intelligence can adjust controls quickly when new security threats appear, such as tools built to gain unauthorized model access or abuse APIs.
